Get Started Today

For Consumer & Enterprise

XR/AR Headset

Attach the Leap Motion Controller 2 to an XR or AR headset. Play games and use applications with just your hands. No controllers needed.

Leap Motion Controller 2

USB cable included

XR Universal Headset Mount

XR Headset*

PC-Headset link cable included

For Consumer & Enterprise

PC/Mac

Connect the Leap Motion Controller 2 to your Windows, Mac, or Linux PC. Control applications on your computer just by gesturing in the air with your hands.

Leap Motion Controller 2

USB cable included

PC/Mac Computer*

For Enterprise

Public Displays

Mount the Ultraleap 3Di to any public screen or plinth to control your display. Control your on-screen application by gesturing in the air with your hands.

Ultraleap 3Di

USB cable included

Display*

BrightSign Box or PC*

Introducing Ultraleap Widgets

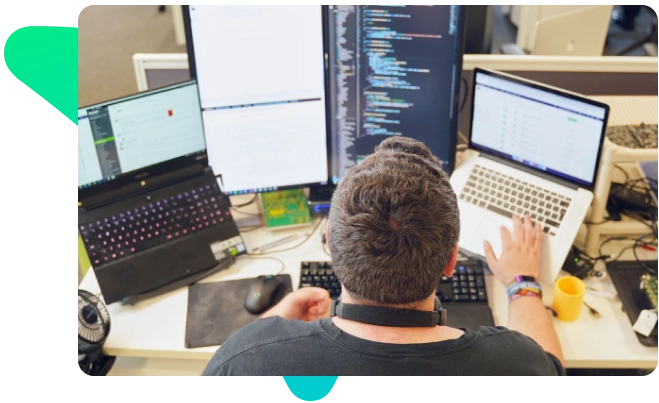

Ultraleap Widgets showcase the potential of the world's best hand tracking on your computer through small desktop apps.

Each widget explores a gestural input to control your computer around the home or the office. Come join us as we explore what your hands can do!

For XR Developers

Technical Support

you need? The Ultraleap support team are here to help, or you can ask our community by joining our Discord.